Five Open-Source Tools That Quietly Murdered the SaaS AI Subscription

The $19 Monthly Tax Nobody Questioned

For years, developers paid GitHub Copilot $19 a month and called it the cost of doing business. The price felt reasonable. The value was obvious—AI-assisted code completion, inline suggestions, chat built into the world's most popular IDE. What wasn't to like?

Nothing, except that the value was never in GitHub's implementation. It was in the underlying model. And the underlying model can now run locally, on your hardware, with no API key, no monthly invoice, and no rate limits.

This isn't speculation. Ollama v0.18.3 ships native integration with GitHub Copilot in VS Code: if you have Ollama installed, any local model is selectable in Copilot's model menu. The feature that cost $19 a month is now a configuration option inside an IDE you've already installed.

This is the moment the SaaS AI model started unraveling. Not with an announcement or a competitor launch—with a settings menu.

---

The Full Stack Is a Docker Compose Away

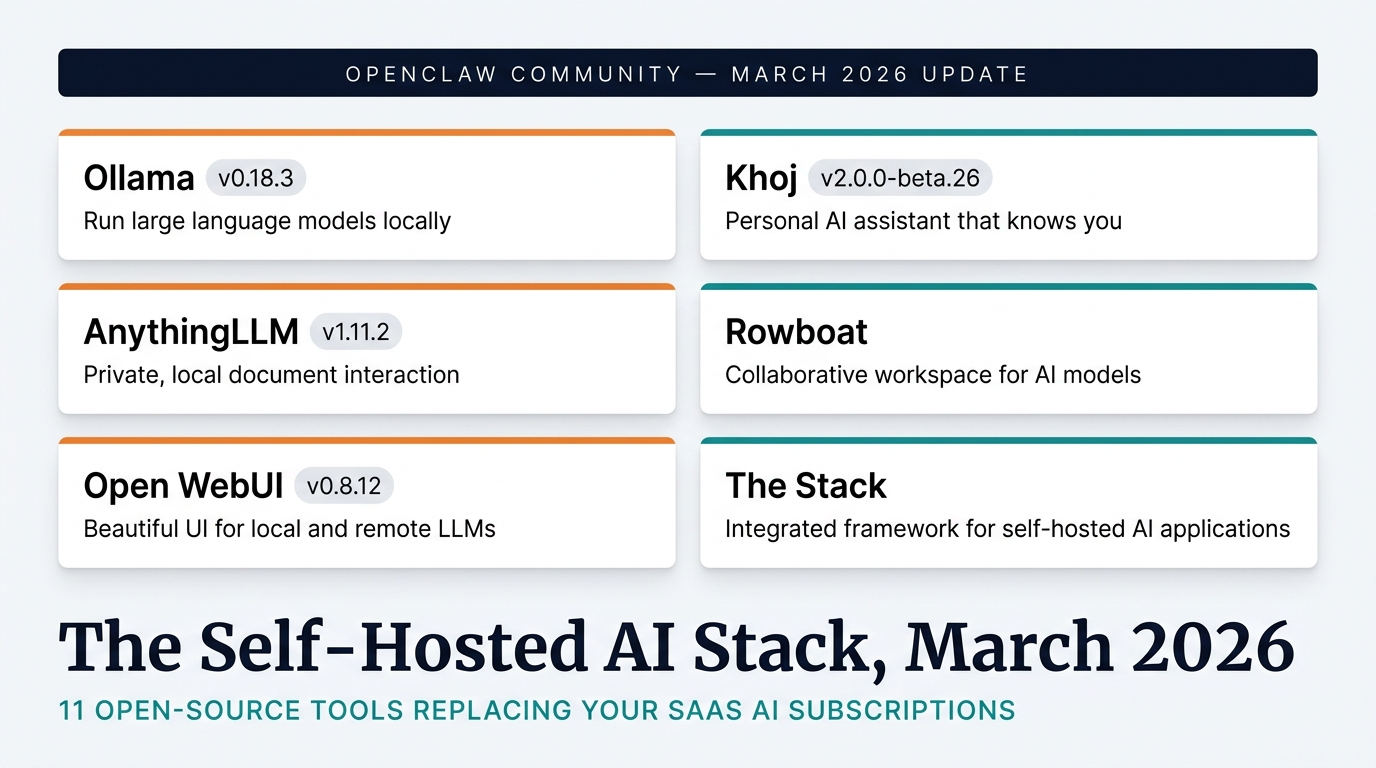

The Copilot integration is the loudest signal, but it's not alone. The self-hosted AI ecosystem has assembled a complete alternative to the SaaS stack most teams rely on, and it's been hiding in plain sight on GitHub's own platform.

AnythingLLM v1.11.2 replaced your meeting transcription suite in one release. Granola, Otter, Fireflies—tools charging $10 to $16 per month per seat for meeting recording and transcription—are now functions of a single MIT-licensed desktop application. The March release added native tool calling across 20+ merged pull requests, Perplexity Search API integration, and AMD GPU optimization. Speaker identification, on-device processing, zero usage caps.

Rowboat replaced Notion's custom agents the same week Notion launched them as a SaaS-only product. Eight pre-releases in five days—March 24 through 28. Auto-registration, V8 memory fixes, draft email options, MCP protocol support, knowledge graph memory persisting context across conversations. MIT-licensed. 500+ integrations. The open-source community didn't just respond to Notion's lock-in strategy—they responded faster than Notion could patch their own roadmap.

Khoj v2.0.0-beta.26 handles the research agent category that SaaS vendors are charging premium prices for. Parallel tool calling for simultaneous research workflows. Obsidian sync for knowledge workers already living in their local markdown files. 40+ knowledge source connectors. The project announced cloud feature deprecation to commit entirely to self-hosting. When a team kills their own cloud product to focus on users running their own infrastructure, they're making a statement about where they think the market is heading.

Open WebUI v0.8.12 gives you the ChatGPT interface experience without the OpenAI invoice. BSD-3-Clause licensed. Production-stable. Security patches applied.

Together, these five tools (Ollama, AnythingLLM, Rowboat, Khoj, and Open WebUI) replace up to $65 per user per month in SaaS subscriptions with a one-time hardware investment that, by the figures below, pays for itself in roughly three to seven and a half months.

---

The Math Nobody Is Running (Until They Do)

Let's be precise about what self-hosted AI infrastructure actually costs versus the alternative.

The average knowledge worker at a software company pays for at least three AI SaaS products: GitHub Copilot for coding, a meeting transcription tool, and an AI-assisted workspace like Notion. Even conservative estimates put this at $45 to $65 per user per month, depending on which specific tools are in the stack.

Scale that to a 15-person engineering team: $8,100 to $11,700 annually, recurring, with no asset to show for it.

Now run the self-hosted calculation. A workstation with 96GB of VRAM, enough to run a 4-bit quantized build of Nemotron-3-Super (the 122B model that currently tops the PinchBench agentic benchmark), costs between $3,000 and $5,000. Ollama runs on it. VS Code connects to it natively. The team's meeting transcription runs on AnythingLLM Desktop. Everything stays local.
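As a rough check on the memory claim, weight storage scales with parameter count times bytes per parameter. The sketch below applies that rule of thumb to the 122B figure above. It assumes the full weight set must be resident, ignores the KV cache and runtime overhead, and treats "q8" and "q4" as idealized 8-bit and 4-bit formats (real quantization schemes carry slightly more per weight).

```python
# Rule-of-thumb weight memory: parameter count x bytes per parameter.
# Assumption: idealized precisions; KV cache, activations, and runtime
# overhead are NOT included and add to the real requirement.
BYTES_PER_PARAM = {
    "fp16": 2.0,  # 16 bits per weight
    "q8": 1.0,    # ~8 bits per weight
    "q4": 0.5,    # ~4 bits per weight
}


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Approximate weight storage in GB (10^9 bytes)."""
    return params_billions * BYTES_PER_PARAM[precision]


def fits(params_billions: float, precision: str, vram_gb: float) -> bool:
    """True if the weights alone fit within the given memory budget."""
    return weight_memory_gb(params_billions, precision) <= vram_gb


if __name__ == "__main__":
    for precision in ("fp16", "q8", "q4"):
        need = weight_memory_gb(122, precision)
        print(f"{precision}: ~{need:.0f} GB of weights, fits in 96 GB: {fits(122, precision, 96)}")
```

Under these assumptions only the 4-bit build (about 61 GB of weights) fits in 96 GB, leaving headroom for context; 8-bit (about 122 GB) and FP16 (about 244 GB) do not.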

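Hardware payback period: just over three months at the favorable end ($3,000 of hardware against $65 per seat) and just under seven and a half months at the other ($5,000 against $45 per seat). After that, the infrastructure cost drops to electricity and maintenance. The team can grow without adding per-seat fees, up to the point where the workstation's inference capacity becomes the bottleneck.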
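The payback arithmetic is simple enough to check directly. This is a minimal sketch using the article's own ranges (15 seats, $45 to $65 per seat per month, $3,000 to $5,000 of hardware); `monthly_running_cost` is a hypothetical parameter for electricity and maintenance, which the figures above leave at zero.

```python
def annual_saas_cost(seats: int, per_seat_monthly: float) -> float:
    """Recurring SaaS spend per year for the whole team."""
    return seats * per_seat_monthly * 12


def payback_months(hardware_cost: float, seats: int, per_seat_monthly: float,
                   monthly_running_cost: float = 0.0) -> float:
    """Months until avoided subscriptions cover the one-time hardware spend.

    monthly_running_cost is a hypothetical allowance for power and upkeep.
    """
    monthly_saving = seats * per_seat_monthly - monthly_running_cost
    if monthly_saving <= 0:
        raise ValueError("running costs meet or exceed the subscriptions replaced")
    return hardware_cost / monthly_saving


if __name__ == "__main__":
    print(annual_saas_cost(15, 45), annual_saas_cost(15, 65))  # 8100 11700
    best = payback_months(3000, 15, 65)   # cheapest hardware, priciest stack
    worst = payback_months(5000, 15, 45)  # priciest hardware, cheapest stack
    print(f"payback: {best:.1f} to {worst:.1f} months")  # payback: 3.1 to 7.4 months
```

Setting a nonzero `monthly_running_cost` lengthens the payback, so it is worth estimating electricity for your own hardware before quoting a figure.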

This math isn't theoretical. Teams are running it. The r/selfhosted community—growing faster than any other technical subreddit as of 2026—has turned AI agent self-hosting from a niche interest into one of the most active discussion categories on the platform. The people sharing their migration stories aren't ideologues. They're operators who ran the numbers and couldn't justify the SaaS invoices anymore.

---

The Compliance Conversation Enterprises Can't Avoid

For a meaningful segment of the market, the economics are almost secondary to the legal reality.

Healthcare organizations handling patient data face HIPAA requirements that make routing PHI to third-party AI APIs a compliance liability requiring documented frameworks, business associate agreements, and audit exposure. Financial services firms are navigating data residency rules that create friction around cloud-hosted AI processing. Legal teams operate under attorney-client privilege that external data processing potentially compromises. European companies face GDPR's explicit requirements around data processing location and consent.

For these organizations, the question isn't "should we self-host?" The question is "can we make self-hosted work well enough to satisfy our compliance obligations?"

The March 2026 release cycle answers that question definitively: yes, it can.

Self-hosted AI infrastructure means data sovereignty by architecture, not by policy. The bits never leave the organization's hardware. There is no third-party API call to audit, no vendor relationship to assess for compliance risk, no data processing agreement to negotiate. The vendor-side compliance overhead that regulated industries pay to use SaaS AI disappears when the infrastructure runs on-premises, though the organization's own obligations (access control, audit logging, encryption) still apply to the self-hosted system.
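One way to make "by architecture" concrete is a guard in client code that refuses to send data to an inference endpoint outside the organization's network. The helper below is a hypothetical sketch, not part of Ollama or any tool named here: it accepts `localhost` plus loopback and private-range addresses as Python's `ipaddress` module classifies them, and rejects any other hostname rather than trusting a DNS answer.

```python
import ipaddress
from urllib.parse import urlparse


def is_local_endpoint(url: str) -> bool:
    """Hypothetical guard: True only for localhost, loopback, or private IPs.

    Hostnames other than "localhost" are rejected, because resolving them
    would depend on DNS that this check cannot vouch for.
    """
    host = urlparse(url).hostname  # brackets are stripped from IPv6 literals
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        return False  # a DNS name, not an IP literal
    return addr.is_loopback or addr.is_private
```

For example, Ollama's default `http://localhost:11434` passes, while a public SaaS API URL does not. A check like this complements network-level controls such as firewall rules; it does not replace them.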

This isn't a soft benefit. For organizations in healthcare, finance, legal, or any sector with strict data governance requirements, self-hosted AI isn't a preference—it's a prerequisite. And until recently, that prerequisite came with a significant capability tradeoff. That tradeoff is gone.

---

The Migration Nobody Announced (But Everyone Is Making)

SaaS vendors don't announce when customers leave. The cancellation email goes to a support inbox, gets processed, and disappears into churn metrics that companies don't publicize. There's no press release for the team that quietly migrated to Ollama and never looked back.

But the signals are accumulating.

Rowboat's development velocity outpacing Notion's own agent development. Khoj killing its cloud tier to focus entirely on self-hosting users. r/selfhosted's most upvoted posts consistently featuring AI agent implementations. GitHub Copilot facing its first serious competition from an open-source alternative that charges no per-seat fee.

The transition is happening the way most market shifts happen: incrementally, then suddenly. Teams don't migrate all at once. They migrate one tool at a time—starting with whichever subscription feels most replaceable, building confidence, running the numbers, then tackling the next piece of the stack.

The teams making this migration today aren't the adventurous edge cases. They're the early majority—pragmatic operators who evaluated the options, tested the tools, and concluded that their SaaS AI invoices were a choice they didn't need to keep making.

---

Your Next Step Is Simpler Than You Think

If you've read this far, you've already done the hardest part: you've acknowledged that the math exists.

The actual migration is less daunting than it sounds. You don't need to migrate everything at once. You don't need to decommission every SaaS subscription immediately. You need to start with one tool, run it in parallel, and evaluate whether it meets your needs.

Start with Ollama on a local machine. Install it. Run a model. Connect it to VS Code. Use it for a week. Compare the output to what you're paying Copilot $19 a month for.
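Beyond the VS Code integration, Ollama exposes a local REST API (by default on port 11434), which makes a week-long side-by-side comparison easy to script. This sketch uses only the Python standard library to call the `/api/generate` endpoint with `"stream": false`, which returns a single JSON object whose `response` field holds the reply. It assumes Ollama is running locally and that a model has already been pulled with `ollama pull`; the function names are illustrative.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default listen address


def build_generate_request(model: str, prompt: str,
                           base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming POST to Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, base_url: str = OLLAMA_URL,
             timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the full reply text."""
    req = build_generate_request(model, prompt, base_url)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.load(resp)  # one JSON object because stream is False
    return body["response"]
```

With a model pulled, `generate("<model-name>", "Explain what a Python generator is.")` returns the reply as a string; substitute whichever model you installed and run the same prompts you would give Copilot.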

If it works—and the evidence suggests it will—expand from there. Add AnythingLLM for meeting transcription. Explore Rowboat for workflow automation. Build from the foundation up, one tool at a time, with full cost transparency at each step.

The SaaS AI subscription model isn't going away tomorrow. But the teams that understand what's now possible with self-hosted infrastructure have a structural advantage: they're paying for infrastructure they own, with data they control, on a stack that scales without billing surprises.

That advantage compounds over time. The earlier you start, the larger it gets.

---

Explore the tools: Ollama for model inference. AnythingLLM for meeting transcription and workspace AI. Rowboat for multi-tool agents with 500+ integrations. Khoj for deep research. Open WebUI for a ChatGPT-style interface. The full ecosystem is catalogued in the GenAI section of the awesome-selfhosted list.

Sources:

- Ollama v0.18.3 Release Notes
- AnythingLLM v1.11.2 Release
- Rowboat Active Development Releases
- Open WebUI v0.8.12 Release
- Khoj v2.0.0-beta.26 Release
- Reddit r/selfhosted
- Hacker News: Self-Hosted AI Discussion