Google's AI Has Been Redesigning Its Own Hardware for a Year

TL;DR

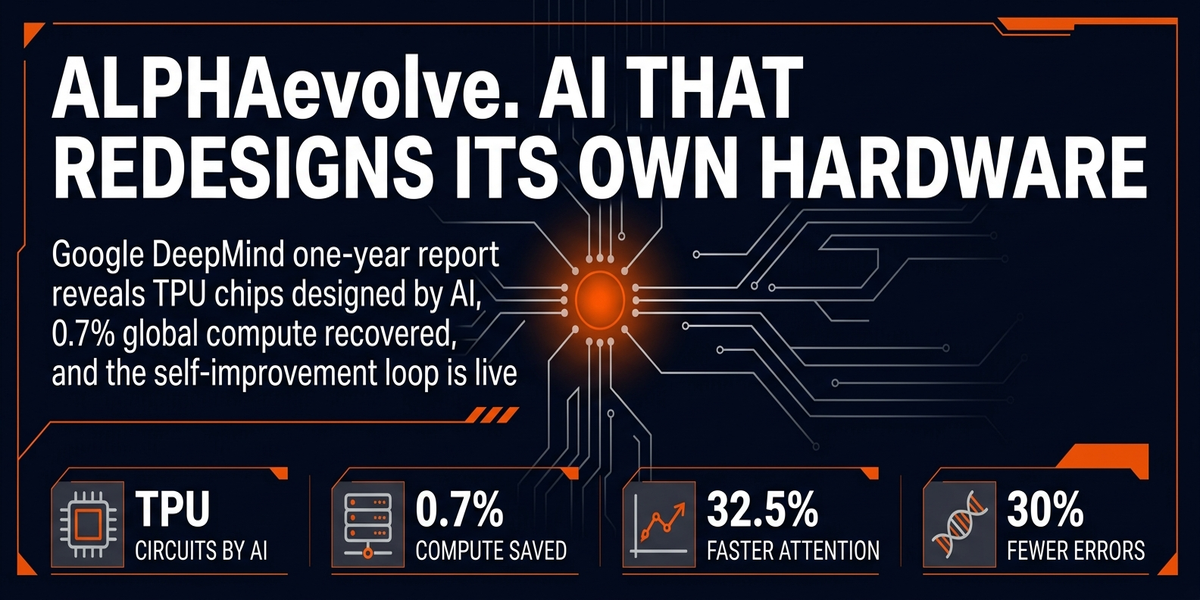

- AlphaEvolve shaved 0.7% off Google's global compute bill. Sounds tiny. At their scale that's thousands of servers and eight figures a year.
- Next-gen TPU chips contain logic AlphaEvolve dreamed up. Engineers called it weird. Metrics said ship it.
- Dual-model loop: one model throws out thousands of variants, another scores them, winners iterate.
- OpenEvolve is live on GitHub. You don't need Google's infrastructure to run the same loop on your own problems.

---

Here's what 0.7% means at scale. Google. Thousands of servers. 24/7. Idle or duplicating work they shouldn't be doing. One overlooked scheduler fix, found by an AI, running in production for over a year. FlashAttention got 32.5% faster too. On a kernel that already had expert human tuning. That's not a rounding error.

That's the difference between a budget that works and one that doesn't.

I keep coming back to that number. 0.7%.

Sounds like nothing. It's the energy bill for a small data center. It's hardware that should've been retired three years ago still drawing power because nobody noticed. Human engineers walked past this fix for years. AlphaEvolve found it in week one.
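If you want to see how a fraction of a percent turns into real money, here's the arithmetic as a quick sketch. The fleet size and the cost per server are made-up placeholders, not Google's figures; swap in your own.

```python
def recovered_servers(fleet_size, savings_basis_points=70):
    """Servers' worth of capacity freed by a given saving (70 bps = 0.7%)."""
    return fleet_size * savings_basis_points / 10_000


# Hypothetical inputs for illustration only, not Google's real numbers.
FLEET_SIZE = 1_000_000          # servers
COST_PER_SERVER_YEAR = 5_000    # dollars, all-in

servers = recovered_servers(FLEET_SIZE)
dollars = servers * COST_PER_SERVER_YEAR

print(f"{servers:,.0f} servers freed, about ${dollars:,.0f} a year")
```

The point isn't the exact output. It's that the percentage stays small while the base does all the work.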

The Loop Behind It

Here's the part nobody talks about enough.

AlphaEvolve isn't a model. It's a feedback system.

Gemini Flash generates fast and loose. Thousands of mutations, low cost, no filter. Gemini Pro sits there evaluating each one. Does this actually run faster? Use less memory? Pull less power? Winners feed back in. Next cycle starts from the top, not from zero.
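Here's the shape of that loop as a toy sketch. This is not AlphaEvolve's code. There's no LLM in it: `mutate` is a random nudge standing in for the cheap generator, and `score` is a simple function standing in for the evaluation step. Every name and parameter is made up for illustration.

```python
import random


def mutate(candidate, rng):
    """Stand-in for the cheap generator: nudge one parameter at random."""
    child = list(candidate)
    i = rng.randrange(len(child))
    child[i] += rng.uniform(-1.0, 1.0)
    return child


def score(candidate):
    """Stand-in for the evaluator: higher is better, peak at (3, -2)."""
    x, y = candidate
    return -((x - 3.0) ** 2 + (y + 2.0) ** 2)


def evolve(seed, generations=50, variants_per_parent=20, keep=5, rng_seed=0):
    rng = random.Random(rng_seed)
    population = [seed]
    for _ in range(generations):
        # Generate: every survivor spawns a batch of cheap variants.
        children = [mutate(p, rng)
                    for p in population
                    for _ in range(variants_per_parent)]
        # Score and select: parents stay in the pool, so the best
        # score never gets worse from one cycle to the next.
        pool = population + children
        population = sorted(pool, key=score, reverse=True)[:keep]
    return population[0]


best = evolve([0.0, 0.0])
print(best, score(best))
```

Keeping the parents in the pool is what "starts from the top, not from zero" means in practice: each cycle builds on the current winners.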

Most AI tools are one-shot. Prompt in, output out, done. AlphaEvolve treats quality as a slope. Over enough iterations the slope finds peaks human code never reaches. Not because the AI is smarter. Because it doesn't get tired. Doesn't get bored.

Doesn't have a favorite approach it won't let go of.

The power grid result still gets me. AC Optimal Power Flow. Feasibility went from 14% to 88% in one shot. Human engineers had been stuck at 14% for years. That's the gap.

That's what iteration looks like when you're not the one doing it.

The Circuits Got Shipped

Jeff Dean confirmed it.

AlphaEvolve-designed circuits ended up in next-gen TPU silicon. Engineers looked at the layouts and said no way. Too weird. Violated conventions they'd spent years building. But the metrics. Power draw. Signal integrity. Gate count. All better.

So they shipped it.

Side note: this is the part where safety people start asking harder questions. The AI that trains on TPUs is now redesigning TPUs. New chips train the next model. That model designs the next chip.

The loop is closed and it's not a thought experiment anymore.

Jack Clark at Anthropic puts it at 60%+ odds that an AI trains its own successor by end of 2028.

Nathan Lambert from the Allen Institute for AI pushes back. Lossy self-improvement, he calls it. The flywheel slows as things get complex. Both are probably right. AlphaEvolve doesn't care about the debate. It's just running.

Your Cloud Bill Has the Same Problem

I know, I know. Google-scale compute sounds like an enterprise problem. It isn't.

Your biggest line item is compute.

AWS, GCP, whatever you're running. Database queries. CI pipelines. Most teams look at that bill once a quarter, wince, move on. They never go back and ask if there's another 15% hiding in the scheduling layer.

Generate-score-iterate. That's the whole playbook. You don't need a TPU cluster. You need a metrics dashboard and the willingness to let an AI try things a human wouldn't try.

I've run this on ad spend.

One campaign structure, with the AI scoring conversion rate per dollar instead of impressions. Iteration three beat iteration one by 15-20%. Not because the AI knows your business better. Because it tries combinations you'd never bother with and doesn't fall in love with the first thing that sort of worked.
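The choice of score matters more than it looks. A rough sketch of why, with invented campaign numbers (none of this is real data, and the variant names are placeholders): the variant that wins on impressions is not the one that wins on conversions per dollar.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    name: str
    spend: float       # dollars
    impressions: int
    conversions: int


def conversions_per_dollar(v):
    """The score the loop optimizes; zero spend scores zero."""
    return v.conversions / v.spend if v.spend > 0 else 0.0


# Invented numbers for illustration only.
variants = [
    Variant("broad_reach", spend=500.0, impressions=120_000, conversions=40),
    Variant("narrow_intent", spend=300.0, impressions=30_000, conversions=45),
    Variant("retargeting", spend=200.0, impressions=15_000, conversions=36),
]

by_impressions = max(variants, key=lambda v: v.impressions)
by_efficiency = max(variants, key=conversions_per_dollar)

print(by_impressions.name, by_efficiency.name)  # broad_reach retargeting
```

Whatever you feed the loop as a score is what it will climb, so pick the number you actually pay for.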

Your biggest line item has waste in it. Guaranteed. The question is whether you've looked with the right tool.

OpenEvolve Is Already on GitHub

If you wanna poke at this yourself, it's out there. OpenEvolve. The dual-model loop, the evolutionary scoring, all of it. Clone it.

Hook up a Gemini API key and a metrics endpoint.

You can run it on pricing models. Ad copy variants. Routing logic. CI caching. Database query patterns. Doesn't matter the domain. The structure is the same: generate, score, keep winners, feed back, repeat. That's the machine. Most people are still using AI to write one-off outputs. The teams that win are running loops that get better every cycle.
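The "metrics endpoint" part is where most of your effort goes. As far as I can tell, OpenEvolve's examples use an evaluator module with an `evaluate` function that takes the path to a candidate program and returns a dictionary of metrics. Treat that contract as my assumption and check the repo's README before relying on it. The `solve` function the candidate has to define, the metric names, and the test input are all hypothetical.

```python
import importlib.util
import time


def evaluate(program_path):
    """Load a candidate program and return its metrics (higher is better).

    Assumes the candidate defines solve(xs) returning xs sorted ascending.
    """
    data = list(range(2000, 0, -1))
    try:
        spec = importlib.util.spec_from_file_location("candidate", program_path)
        module = importlib.util.module_from_spec(spec)
        spec.loader.exec_module(module)
        start = time.perf_counter()
        result = module.solve(list(data))
        elapsed = time.perf_counter() - start
    except Exception:
        # A crashing candidate scores zero instead of stopping the run.
        return {"correct": 0.0, "speed": 0.0}

    correct = 1.0 if result == sorted(data) else 0.0
    # Wrong answers get no credit for being fast.
    return {"correct": correct, "speed": correct / (1.0 + elapsed)}
```

Two design choices worth copying even if the exact interface differs: failures return a score instead of raising, and speed only counts when the answer is right. Otherwise the loop will happily evolve something fast and wrong.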

The thing that made human engineers valuable was finding what's broken and fixing it.

AlphaEvolve does that now. Faster than any human process would tolerate. It doesn't get tired. Doesn't have ego tied up in the approach. Just keeps iterating until the metrics say stop.

Your move.

---

Sources:

- Google DeepMind Blog: AlphaEvolve Impact Report
- HN Thread, 294 points
- Byteiota: AlphaEvolve Coverage
- Winbuzzer: Gemini-Powered AlphaEvolve
