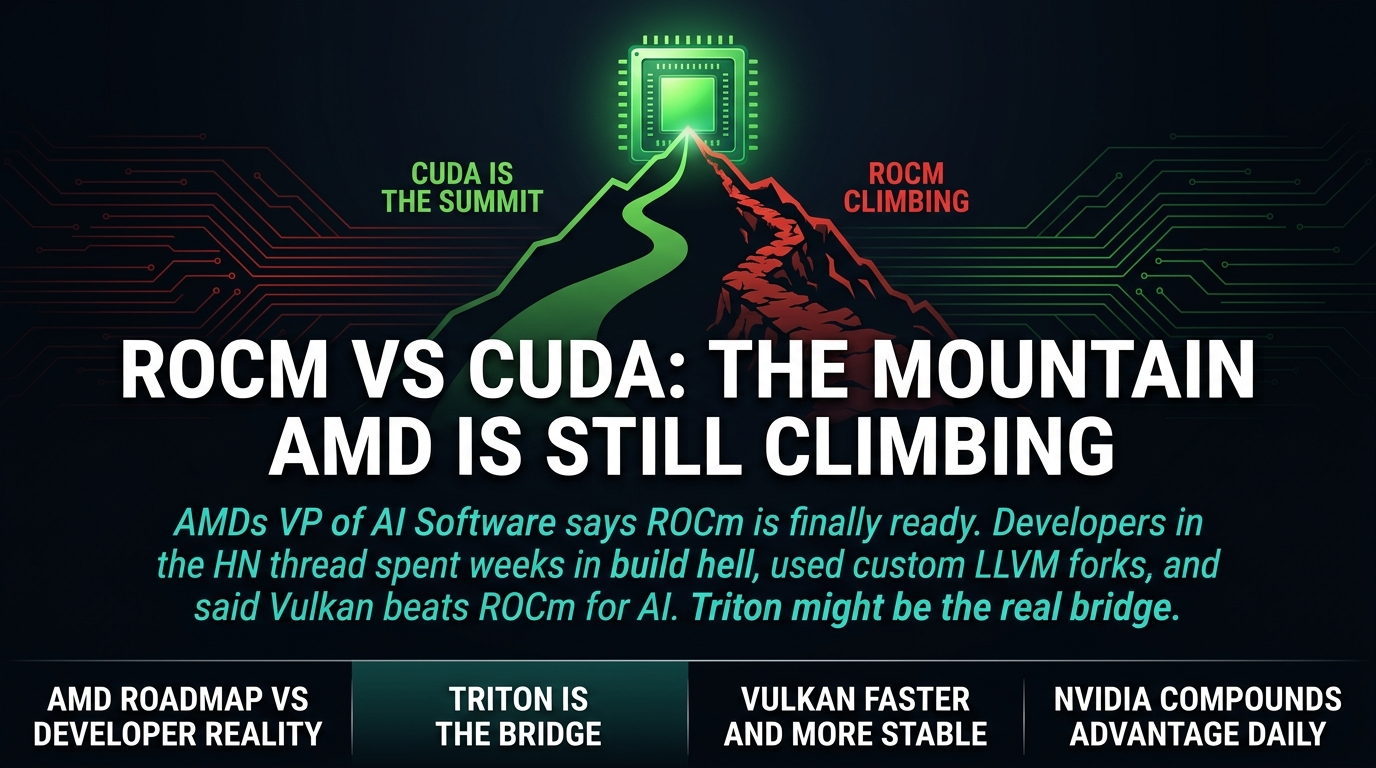

AMD says ROCm is finally ready. The developers who tried it are not so sure.

EE Times ran an interview with AMD's VP of AI Software this week. He made a coherent case for ROCm as a real CUDA challenger. The headline quote: "It's like climbing a mountain — one step in front of another." AMD has been rebuilding its software stack for 2.5 years. They invested in Triton. They released every six weeks. The pitch sounds solid.

The HN thread hit 213 points with 161 comments. The comments are brutal. One developer spent weeks trying to get tuned Tensile kernels shipped for a GPU used by Ollama and could not find a single person at AMD who owned the issue. Another spent a week building 30-plus dependencies including a custom LLVM fork just to get ROCm working. "It has been a bit of a nightmare," they said.

That gap between the roadmap and the build system is the story.

Here's the thing: AMD's case is not wrong. The technical investments are real. A unified OneROCm stack exists. Triton and MLIR are legitimate investments. The team came from Google with a "just works" product philosophy. If you are charitable, AMD is genuinely climbing the mountain.

But charity does not compile your code.

One developer in the thread described trying to get a tuned kernel shipped and hitting a wall. Not a technical wall. An organizational one. Nobody at AMD owned the issue. Nobody could point them to the right person. That is not a build system problem. That is a company that has not figured out how to support the developers it is trying to recruit.

Another developer described the build process in detail. Thirty-plus dependencies. A custom LLVM fork. Weeks of work. They got it working eventually, but their conclusion was instructive. They said they got ROCm built but "would not say it was worth the administrative overhead."

This is the pattern you see over and over in the thread. AMD can say all the right things in an interview. The question is whether the organizational issues and build system mess get fixed faster than NVIDIA's ecosystem advantage compounds. The developers in that thread are not holding their breath.

It gets worse. Multiple developers in that thread independently said the same thing, and it sounds like a joke until you think about it: Vulkan plus llama.cpp was sufficient for their needs. Vulkan. The graphics API. By their account it is faster, more stable, and "just works" for AI inference, while ROCm required weeks of pain.

Let that sink in.

Vulkan and ROCm both date to 2016. ROCm was built specifically for GPU compute. Vulkan is a graphics API that happens to run inference workloads. And Vulkan is winning on developer experience. That tells you a lot about where AMD's software priorities have been, and why the "one step at a time" narrative might be too generous.

But wait: there is a real shift happening that AMD is actually part of, and it might matter more than the build system problems.

The real story is Triton. When most developers work above the kernel layer using frameworks like llama.cpp, the CUDA versus HIP distinction becomes irrelevant. Write a kernel once in Triton and, in principle, it runs on AMD or NVIDIA hardware without modification. OpenAI built Triton, and AMD has invested heavily in the Triton team. It is becoming the abstraction layer that makes GPU programming portable.
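To make "write once" concrete, here is a minimal sketch of a Triton vector-add kernel, modeled on the standard introductory example. It assumes PyTorch and Triton are installed alongside a supported GPU; the names `add_kernel`, `add`, and `demo` are illustrative only. Nothing in the kernel source names a vendor, and ROCm builds of PyTorch also expose the GPU under the `"cuda"` device string, so the script itself does not change between the two.

```python
# Sketch only: assumes PyTorch and Triton are installed with a supported GPU.
try:
    import torch
    import triton
    import triton.language as tl
    HAVE_TRITON = True
except ImportError:  # lets the file load on machines without the GPU stack
    HAVE_TRITON = False

if HAVE_TRITON:
    @triton.jit
    def add_kernel(x_ptr, y_ptr, out_ptr, n_elements, BLOCK_SIZE: tl.constexpr):
        # Each program instance handles one contiguous block of elements.
        pid = tl.program_id(axis=0)
        offsets = pid * BLOCK_SIZE + tl.arange(0, BLOCK_SIZE)
        mask = offsets < n_elements  # guard the final, partial block
        x = tl.load(x_ptr + offsets, mask=mask)
        y = tl.load(y_ptr + offsets, mask=mask)
        tl.store(out_ptr + offsets, x + y, mask=mask)

    def add(x, y):
        # Launch enough program instances to cover every element.
        out = torch.empty_like(x)
        n = out.numel()
        grid = (triton.cdiv(n, 1024),)
        add_kernel[grid](x, y, out, n, BLOCK_SIZE=1024)
        return out

def demo():
    # Returns None when no usable GPU stack is present.
    if not (HAVE_TRITON and torch.cuda.is_available()):
        return None
    x = torch.rand(4096, device="cuda")
    y = torch.rand(4096, device="cuda")
    return bool(torch.allclose(add(x, y), x + y))
```

Whether a given kernel performs equally well on both vendors is a separate question; the sketch only shows that the source does not have to fork.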

If this holds — and it is still early — NVIDIA's CUDA moat is eroding from above, not below. That is a faster path to commoditization than AMD overtaking NVIDIA at the kernel level. AMD knows this, which is why they put real money into Triton instead of just pushing HIP compatibility.

So the question is not whether ROCm is improving. It is. The question is whether the Triton layer gets good enough fast enough that the CUDA lock-in story becomes irrelevant before AMD closes the developer experience gap on its own stack.

The real kicker: Vulkan developers in that same thread said they do not care about CUDA versus ROCm at all. They found a path that works and they are staying on it. That is the competition AMD is actually losing to — not NVIDIA, but the open source inference stack that does not require buying into either ecosystem.

If you are evaluating GPU options for AI work right now, here is what to actually consider. Do not take the vendor's word for it. Check the HN comments on anything AMD ships. Look at how long it takes to get a working build in a realistic environment. Talk to developers who tried and gave up. The roadmap is not the product. The build system is.
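One low-effort way to put a number on "how long it takes to get a working build" is to time each setup step yourself. The sketch below uses only the Python standard library; `time_command` is a hypothetical helper, and the command shown is a placeholder you would swap for your real install or build step.

```python
import subprocess
import sys
import time

def time_command(cmd, timeout_s=None):
    """Run cmd (a list of arguments) and return (exit_code, elapsed_seconds)."""
    start = time.perf_counter()
    result = subprocess.run(cmd, capture_output=True, text=True, timeout=timeout_s)
    return result.returncode, time.perf_counter() - start

if __name__ == "__main__":
    # Placeholder command; substitute your actual build or install step.
    code, secs = time_command([sys.executable, "-c", "print('build step')"])
    print(f"exit={code} elapsed={secs:.1f}s")
```

Run it in a clean container or a fresh machine rather than your already-configured workstation, so the number reflects what a new developer would actually face.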

AMD is climbing the mountain. NVIDIA is not standing still. And the developers who burned weeks on ROCm are running Vulkan and llama.cpp instead, and they are not coming back until the experience improves.

Check the comments before you commit to any stack.

Sources:

- EE Times: Taking on CUDA with ROCm
- Hacker News discussion