Opus 4.7 Is Burning Through Your Rate Limits Faster Than You Think

A community data analysis showing that Opus 4.7 consumes roughly 45% more tokens than 4.6 on identical tasks hit 379 points on HN today. The headline is real. But the story is more complicated than the number suggests, and for agency operators running production workloads, the nuanced version is the more useful one.

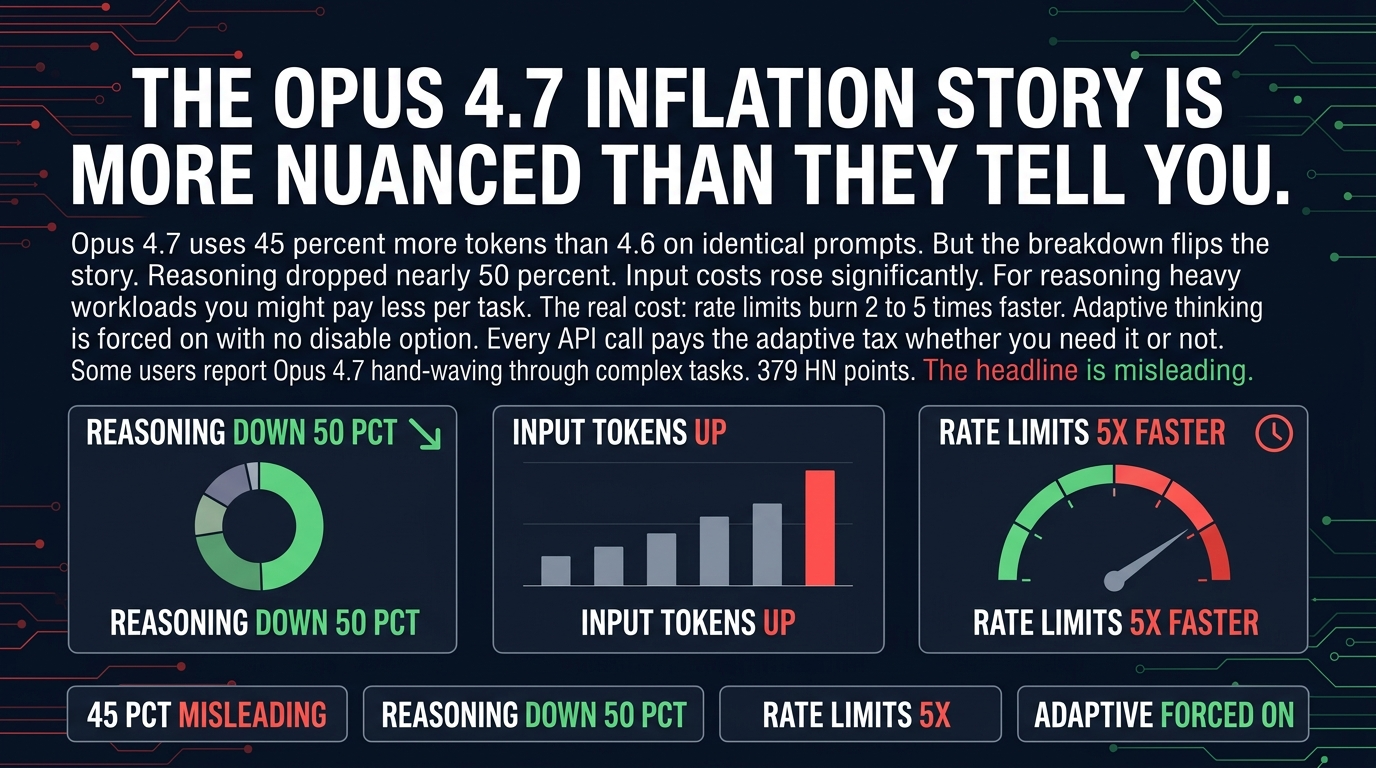

The 45% Inflation Number Is Real But Misleading

The token tracker showing 45% more usage is accurate. What it does not tell you is why. Opus 4.7 ships with adaptive thinking enabled by default. The model spends more tokens reasoning through complex problems before responding. That is the bulk of the increase.

But here is the part that matters for your cost model: the cost structure flipped. Reasoning tokens got roughly 50% cheaper, while input tokens got more expensive. If your workload is heavy on reasoning (complex code generation, multi-step analysis, anything where the model works through a problem before answering), you might actually pay less per task with 4.7 than you did with 4.6. The math depends entirely on your workload mix.
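To see how the workload mix can swing the result either way, here is a minimal sketch of the per-task arithmetic. Every price and token count below is a made-up placeholder, not a published rate. The only things taken from the claims above are the direction of the changes: reasoning priced about 50% lower, input priced higher, and more reasoning tokens spent per task.

```python
from dataclasses import dataclass


@dataclass
class Prices:
    """Dollars per million tokens. All values used below are hypothetical."""
    input: float
    reasoning: float
    output: float


@dataclass
class Task:
    """Token counts consumed by one completed task."""
    input: int
    reasoning: int
    output: int


def cost(task: Task, prices: Prices) -> float:
    """Dollar cost of a single task under a given price schedule."""
    total = (
        task.input * prices.input
        + task.reasoning * prices.reasoning
        + task.output * prices.output
    )
    return total / 1_000_000


# Hypothetical schedules: reasoning 50% cheaper, input 20% more expensive.
OLD = Prices(input=10.0, reasoning=40.0, output=40.0)
NEW = Prices(input=12.0, reasoning=20.0, output=40.0)

# Hypothetical workloads: the new model spends 50% more reasoning tokens.
reasoning_heavy_old = Task(input=2_000, reasoning=8_000, output=1_000)
reasoning_heavy_new = Task(input=2_000, reasoning=12_000, output=1_000)
input_heavy_old = Task(input=50_000, reasoning=500, output=500)
input_heavy_new = Task(input=50_000, reasoning=750, output=500)

print(f"reasoning-heavy: {cost(reasoning_heavy_old, OLD):.3f} -> "
      f"{cost(reasoning_heavy_new, NEW):.3f}")
print(f"input-heavy:     {cost(input_heavy_old, OLD):.3f} -> "
      f"{cost(input_heavy_new, NEW):.3f}")
```

With these placeholder numbers the reasoning-heavy task gets cheaper even though it uses more tokens, while the input-heavy task gets more expensive. Swap in your own token counts and the rates you actually pay before drawing any conclusion.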

The HN thread has people arguing past each other because both sides are right. Users tracking total token count see a big increase. Users tracking actual dollar cost per completed task see something closer to parity or even a slight decrease. Neither group is wrong. They have different workloads.

The Real Problem Is Rate Limits, Not Per-Token Cost

If you are running an agency and your team shares a Claude API pool, this changes your operations. You used to be able to run a full day of work against the pool's rate limit. Now the same workload blows through it before lunch. The per-task cost might be similar, but the number of tasks you can run in a billing period dropped significantly. That is an effective price increase regardless of what the token math says.
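The capacity effect is easy to put a number on. The sketch below assumes a fixed token budget per rate-limit window and applies the 45% inflation figure to a per-task token count. The budget and the per-task count are hypothetical, so substitute your own.

```python
def tasks_per_window(token_budget: int, tokens_per_task: int) -> int:
    """Whole tasks that fit inside one rate-limit window."""
    return token_budget // tokens_per_task


WINDOW_BUDGET = 10_000_000   # hypothetical tokens allowed per window
TOKENS_PER_TASK_46 = 20_000  # hypothetical average on 4.6
INFLATION = 1.45             # the roughly 45% increase from the analysis

tokens_per_task_47 = round(TOKENS_PER_TASK_46 * INFLATION)

before = tasks_per_window(WINDOW_BUDGET, TOKENS_PER_TASK_46)
after = tasks_per_window(WINDOW_BUDGET, tokens_per_task_47)
drop = 1 - after / before

print(f"tasks per window: {before} -> {after} ({drop:.0%} fewer)")
```

Under these assumptions a pool that used to fit 500 tasks fits 344, about 31% fewer, even if the dollar cost per task did not move.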

Anthropic enabled adaptive thinking by default across all tiers, including API users. You cannot turn it off. Every API call pays for the model's internal monologue whether you need it or not. For simple requests that 4.6 would have handled in a few tokens, 4.7 runs a full adaptive thinking sequence. That is a hidden tax on low-complexity requests. If you are building automation that makes a lot of simple API calls, you are burning rate limit budget on thinking that was never necessary.

The Quality Issues Are Real And Worth Taking Seriously

The HN thread has a pattern of reports that the model is occasionally hand-waving through complex tasks instead of properly working through them. Small mistakes. Logical shortcuts. Things that 4.6 would have caught.

I am not going to overstate this. It is not universal. A lot of users are happy with 4.7. But the reports are consistent enough that I am paying attention to them, and you should too if you are using Opus for anything where a small error costs more than the API bill.

For production code generation, complex automation pipelines, anything where you need the model to be thorough rather than fast, the quality signal matters. A model that burns through your rate limit in two hours and cuts corners on quality is not a productivity win even if the per-token math looks good.

Opus 4.5 Is Looking Attractive Again

Opus 4.5 is worth reconsidering if cost predictability matters more to you than benchmark scores, and Sonnet is another option on the same grounds. The Opus vs Sonnet debate has been dominated by capability benchmarks for a while. For actual production operations, cost stability and predictable rate limit consumption might matter more than whatever the latest benchmark says.

One Action To Take This Week

If your team is running Opus 4.7 in production, do an audit. Pull your usage logs from the past two weeks and look at three numbers. Total tokens per conversation. Total cost per completed task. Rate limit consumption over time.

Compare those to what you were getting with 4.6. If your per-task cost is similar but you are hitting rate limits 3-5x faster, you are effectively paying more for the same output even if the token math looks neutral.
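A rough sketch of that audit, assuming you can export one record per API call. The field names (`ts`, `conversation`, `tokens`, `cost`, `completed`) and the sample rows are invented for illustration, and a real usage export will look different.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean

# Hypothetical records, one per API call. "completed" marks the call
# that finished a task.
LOGS = [
    {"ts": "2025-01-06T09:05:00", "conversation": "a", "tokens": 12_000, "cost": 0.30, "completed": False},
    {"ts": "2025-01-06T09:20:00", "conversation": "a", "tokens": 8_000, "cost": 0.20, "completed": True},
    {"ts": "2025-01-06T09:40:00", "conversation": "b", "tokens": 5_000, "cost": 0.10, "completed": True},
    {"ts": "2025-01-06T10:10:00", "conversation": "c", "tokens": 15_000, "cost": 0.40, "completed": False},
]


def audit(logs):
    """Return the three audit numbers for a list of per-call records."""
    per_conversation = defaultdict(int)
    per_hour = defaultdict(int)
    for row in logs:
        per_conversation[row["conversation"]] += row["tokens"]
        # Bucket token usage by clock hour to see when the limit drains.
        hour = datetime.fromisoformat(row["ts"]).strftime("%Y-%m-%d %H:00")
        per_hour[hour] += row["tokens"]

    completed = sum(1 for row in logs if row["completed"])
    total_cost = sum(row["cost"] for row in logs)
    return {
        "avg_tokens_per_conversation": mean(per_conversation.values()),
        "cost_per_completed_task": total_cost / completed if completed else None,
        "tokens_per_hour": dict(per_hour),
    }


report = audit(LOGS)
print(report)
```

Run it once over a two-week window from your 4.6 period and once over a 4.7 window, then compare the two reports side by side.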

Then benchmark a sample of tasks against 4.5. Not to switch immediately, but to have real data on whether the quality issues with 4.7 are affecting your specific workload. The HN reports might not match your use case. Only your own testing will tell you.
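Once you have graded the same sample of tasks on both models, the comparison itself is simple. This sketch assumes you already have a pass/fail verdict per task from your own review or test suite. The task IDs and verdicts below are placeholders, not real results.

```python
def compare(baseline: dict[str, bool], candidate: dict[str, bool]) -> dict:
    """Compare pass/fail verdicts on the tasks both models were run on."""
    shared = sorted(baseline.keys() & candidate.keys())
    if not shared:
        raise ValueError("no tasks in common")
    return {
        "baseline_pass_rate": sum(baseline[t] for t in shared) / len(shared),
        "candidate_pass_rate": sum(candidate[t] for t in shared) / len(shared),
        # Tasks the baseline got right and the candidate got wrong.
        "regressions": [t for t in shared if baseline[t] and not candidate[t]],
        # Tasks the candidate got right and the baseline got wrong.
        "improvements": [t for t in shared if candidate[t] and not baseline[t]],
    }


# Placeholder verdicts: True means the output passed your own review.
results_45 = {"t1": True, "t2": True, "t3": False, "t4": True}
results_47 = {"t1": True, "t2": False, "t3": False, "t4": True}

summary = compare(baseline=results_45, candidate=results_47)
print(summary)
```

The list of regressions is the part to read closely. A handful of specific tasks that 4.5 passes and 4.7 fails tells you more about your workload than either pass rate on its own.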

The model got smarter at reasoning. It also got more expensive to run at scale and occasionally messier in its outputs. That is a fair trade for some workloads and the wrong trade for others. Figure out which one yours is before you renew that annual plan.

Sources:

- HN discussion: https://news.ycombinator.com/item?id=47816960
- Token price comparison: https://tokens.billchambers.me/leaderboard