Your AI Dependency Is a Single Point of Failure

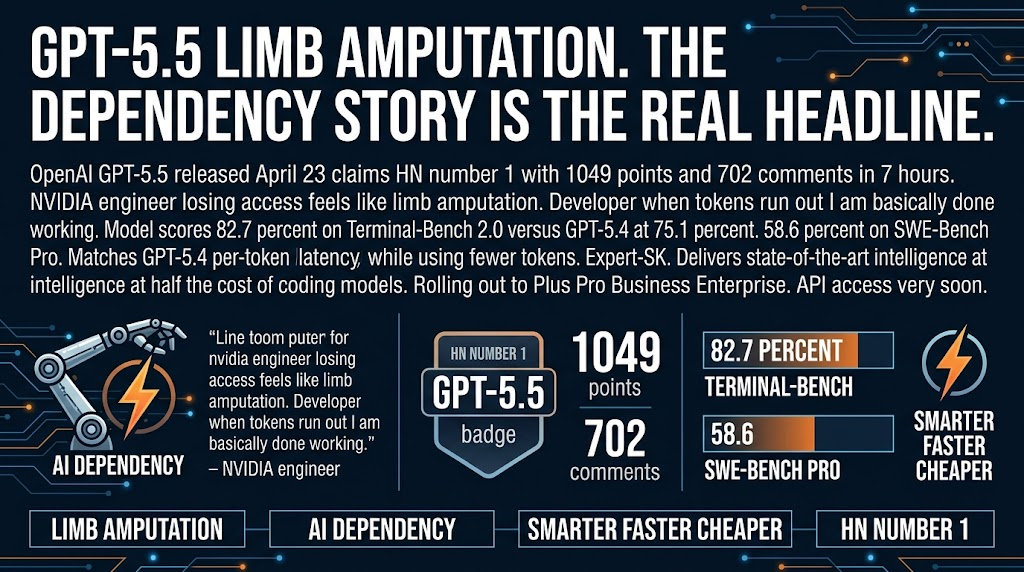

Losing access to GPT-5.5 feels like having a limb amputated. That's not my metaphor. It's a direct quote from a Hacker News (HN) comment by an NVIDIA engineer. Another developer wrote: "When the tokens run out, I'm basically done working."

GPT-5.5 dropped this week with state-of-the-art coding benchmarks and better latency than GPT-5.4 while using fewer tokens. OpenAI is calling it their best model yet. But the top HN comment isn't about benchmarks. It's about dependency.

Here's the thing nobody wants to say out loud. We've crossed from "using a tool" to "dependent on a service" without acknowledging the distinction.

The Dependency Nobody Talks About

The NVIDIA engineer wasn't exaggerating for effect. One developer on HN wrote: "It's literally higher leverage for me to go for a walk if Claude goes down than to write code." And the "tokens run out" comment is starker in full: "I don't really have the patience to write code anymore because I can one-shot it with frontier models 10x faster... When the tokens run out, I'm basically done working."

These aren't edge cases. These are the people who build the tools you use every day.

The benchmarks tell you what the model can do. They don't tell you what happens to your business when the model isn't available: when there's an outage, when the pricing changes, when the service shuts down. These tools are APIs we don't control. If your entire workflow collapses when the API goes down, that's not a workflow. That's a single point of failure you've decorated with productivity metrics.

The Wrapper Business Problem

Here's the thing that should concern every AI tooling company.

GPT-5.5 is smarter and faster and cheaper per task than the model it replaced. If OpenAI keeps closing the gap between raw model quality and what third-party tools add on top, the "better UX for AI coding" market gets squeezed hard.

Your moat can't be "we make the model easier to use" because the model is making itself easier to use. Cursor, Copilot, all the wrappers are built on the assumption that the gap between model capability and user capability is large enough to justify their existence. That gap is shrinking fast.

For small agencies building on AI tooling: what's your actual moat? If the answer is "we wrap the model in a better UX," that's not a moat. That's a feature that lives on borrowed time.

The Skill Problem Nobody Acknowledges

"When the tokens run out, I'm basically done working" is not a productivity win. It's a skill problem disguised as a productivity win. Yes, AI helps you ship faster. Yes, that's valuable. But if you can't ship without it, you've traded craftsmanship for convenience. That works until it doesn't.

The 3am scenario nobody thinks about: your AI API has an outage. Your client needs a fix. You can't write the code fast enough to meet the deadline without the AI. That's not hypothetical. That's what dependency looks like in practice.

The engineers writing "limb amputation" posts are not bad developers. They're probably very good developers who slid into AI dependency without building redundancy into their workflows. The dependency crept up on them because the productivity gains were real and immediate while the risks were abstract and far off.

What You Should Actually Do

Three things, in order.

First, build redundancy into your AI workflows. Don't have a single point of failure. If you're all-in on GPT-5.5, know how to operate if it goes down. Keep a second model in your stack. Know how to work without the model for a day. Test it.

Second, maintain your underlying skills. AI should accelerate your work, not replace your ability to do the work. Use it to ship faster, but make sure you can still ship without it on the day the API goes down or the pricing changes.

Third, audit your wrapper dependencies. If you're building client workflows on Cursor, Copilot, or any AI tool, understand what your moat actually is. If your value proposition is "we make AI easier to use," that moat is shrinking with every model release.
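To make the first step concrete, here is a minimal sketch of what "keep a second model in your stack" can look like in code. Everything in it is an assumption for illustration: `Provider`, `complete_with_fallback`, and the two stand-in functions are hypothetical names, not any vendor's SDK. In a real setup each `complete` callable would wrap whichever client library you actually use.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider has failed."""


@dataclass
class Provider:
    name: str
    # Hypothetical interface: takes a prompt, returns generated text.
    complete: Callable[[str], str]


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try each provider in order; return (provider_name, response)."""
    errors: list[str] = []
    for provider in providers:
        try:
            return provider.name, provider.complete(prompt)
        except Exception as exc:  # outage, rate limit, exhausted quota, ...
            errors.append(f"{provider.name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stand-ins for real clients, so the sketch runs without network access.
def primary_down(prompt: str) -> str:
    raise ConnectionError("primary API unavailable")


def secondary_ok(prompt: str) -> str:
    return f"[secondary] draft for: {prompt}"


providers = [
    Provider("primary", primary_down),
    Provider("secondary", secondary_ok),
]

used, answer = complete_with_fallback("fix the login bug", providers)
print(used)  # prints "secondary"
```

The useful part is less the loop than the habit it forces: if the second provider is wired in and exercised regularly, an outage becomes a slower day instead of a lost one. When every provider fails, `AllProvidersFailed` is the signal to fall back on the skills from the second step.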

The dependency discussion is the real story this week. Not the benchmarks. Not the latency improvements. The fact that the best engineers in the industry are describing their AI usage in terms that sound less like tool usage and more like dependency. Build accordingly.

Sources: OpenAI | HN Discussion