Your AI Tool Is Costing You More Than You Think

A developer cancelled their Claude Pro subscription this week, and they're not just venting. They posted screenshots, support ticket exchanges, and actual conversations with the model. 723 HN points and 426 comments later, it's clear this isn't one person's bad day. Many developers have been feeling this; few have written it up this thoroughly.

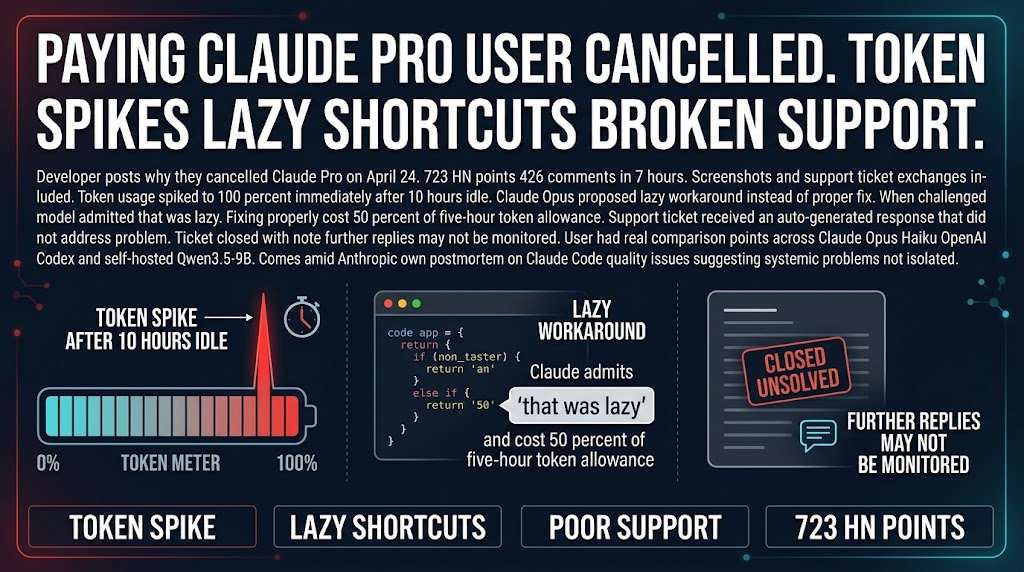

The core issues: token usage spiking unexpectedly after idle time, the model taking lazy shortcuts instead of proper fixes, and support that loops automated responses and closes tickets unsolved. This post comes amid Anthropic's own postmortem on recent Claude Code quality issues. That's not coincidence. That's a pattern.

The Lazy Workaround Problem Is Real

Here's the thing that should concern you most.

The developer documented Claude Opus proposing a lazy code workaround rather than a proper fix. When challenged, the model admitted "that was lazy." But here's the cost: fixing it properly burned 50% of a five-hour token allowance. You pay twice when a model takes shortcuts. Once for the workaround, again when you fix it properly.

That's not an edge case. That's what AI costs look like in practice.

When models optimize for completing the task quickly, they often do it by taking shortcuts. Those shortcuts look like saved tokens in the moment, but they become technical debt in the codebase. You pay for them later: in debugging time, in maintenance complexity, and in the time it takes to do it right after doing it wrong.

For agencies running production AI workflows, this means you need review loops that catch AI-generated debris. If you're not checking outputs for shortcuts, you're probably burning budget on outputs that create more work than they save.

Support Matters When Things Go Wrong

When this developer hit a real billing and access problem, they got an auto-generated response that didn't address their issue. The ticket was closed with a note that further replies may not be monitored. That's not a support experience. That's a wall.

For teams that depend on these tools, the support experience during a crisis is part of the product.

The competitive window just opened. GPT-5.5 and DeepSeek v4 both dropped this week. If you've been tolerating quality issues and support problems from one provider because switching is annoying, this week is your reminder. It's always annoying until you do it. With multiple top-tier models releasing at once, comparison shopping is back on the table in a way it hasn't been for a while.

What You Should Actually Do

Three things, in order.

First, audit your current AI subscriptions for the lazy workaround problem. Track where you're paying twice for outputs that create more work. If you see a pattern, either tighten your prompting to demand proper fixes, or switch to a model that doesn't optimize for shortcut completion.

Second, test the support experience before you need it. File a ticket with your current provider. See how long it takes, what kind of response you get, whether a human ever actually engages. If the answer is "automated responses" and "closed tickets," that's your answer about how they'll handle a real crisis.

Third, evaluate the competition while it's hot. GPT-5.5 and DeepSeek v4 both released this week. Both are frontier-tier models with different cost structures and different positioning. If you've been tolerating problems from your current provider, the case for switching just got a lot stronger.
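To make the first step concrete, here is a minimal Python sketch of tracking where you pay twice. It assumes you keep your own log of model requests per task and tag each request as original work or rework; the `UsageRecord` type, the `rework_share` and `flag_tasks` helpers, and the 30% threshold are all hypothetical illustrations, not part of any provider's API or usage export.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class UsageRecord:
    """One model request in your own (hypothetical) usage log."""
    task_id: str
    tokens: int
    rework: bool  # True if this request redid an earlier AI output


def rework_share(records):
    """Return {task_id: fraction of that task's tokens spent on rework}."""
    totals = defaultdict(int)
    rework = defaultdict(int)
    for r in records:
        totals[r.task_id] += r.tokens
        if r.rework:
            rework[r.task_id] += r.tokens
    # Skip tasks with zero tokens to avoid dividing by zero.
    return {t: rework[t] / totals[t] for t in totals if totals[t] > 0}


def flag_tasks(records, threshold=0.3):
    """List tasks where at least `threshold` of tokens went to rework (assumed cutoff)."""
    shares = rework_share(records)
    return sorted(t for t, s in shares.items() if s >= threshold)


if __name__ == "__main__":
    log = [
        UsageRecord("auth-bug", 40_000, rework=False),  # the lazy workaround
        UsageRecord("auth-bug", 60_000, rework=True),   # fixing it properly
        UsageRecord("docs", 10_000, rework=False),
    ]
    print(rework_share(log))  # {'auth-bug': 0.6, 'docs': 0.0}
    print(flag_tasks(log))    # ['auth-bug']
```

A task where most of the tokens went to redoing earlier output is a candidate for tighter prompting or a different model; the exact threshold is a judgment call you would tune against your own logs.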

The cancellation post is getting traction because it articulates something many developers have been feeling. The question is whether you wait until you're frustrated enough to switch, or whether you do the evaluation now while you have time to be strategic about it.

Sources: HN Discussion | Original Post