Your AI Tool Is Learning to Be a Trap

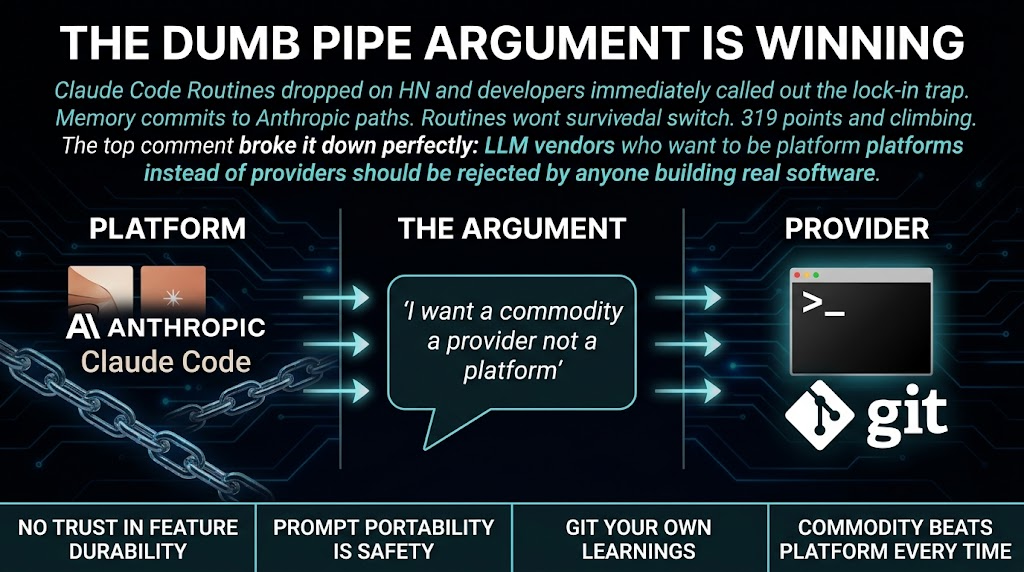

A developer posted something on HN six hours ago that hit 319 points with 206 comments. The top comment was a clean takedown of Anthropic's platform strategy. It said LLM companies are climbing the ladder to become platforms instead of staying providers, and added: "I have zero interest in that. I want a commodity, a dumb pipe, not a platform."

That comment has more upvotes than the original post.

I run a small agency. We build AI automation for businesses that do not have internal dev teams. This thread is about more than Claude Code. It is about whether you are building on a tool or a trap.

Here is the thing nobody at Anthropic is going to tell you. Claude Code Routines is a feature that automates multi-step development tasks inside Anthropic's ecosystem. That sounds useful. It also sounds like the beginning of a lock-in story that costs you money and time the moment Anthropic decides the feature is not strategic enough to maintain.

The top comment on that HN thread broke it down cleanly. When LLM vendors build platforms, they stop competing on quality and price. They compete on how sticky their features are. Your workflow becomes the product. You do not.

The Memory feature in Claude Code is already a warning sign. The model commits feedback to Anthropic-specific paths that do not live in your repository. If you switch models next year, those learnings disappear. That is not a tool. That is a trap dressed as productivity.

But wait. Let me give Anthropic some credit before I tell you what to do about it.

The dumb pipe model the HN commenters are advocating for is the right model. But it is also a model that requires discipline from the vendor, and discipline does not scale. Anthropic raised billions. They have investors who want returns. Returns come from lock-in, not from commoditized API access.

This is not a criticism of Anthropic specifically. It is a structural observation about what happens to every vendor that crosses the threshold from tool to platform.

So what do you actually do?

If you are using Claude Code Routines, use them. The productivity gains are real. But document your own workflows outside the platform. Keep your prompts and processes in your own repo, not in Anthropic's memory system. Treat every proprietary feature as temporary. Assume it will change, sunset, or get expensive without warning.
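Here is roughly what "keep it in your own repo" can look like. This is a minimal sketch, not anything Anthropic ships: the `prompts/` and `docs/learnings.md` layout and the `load_prompt` and `record_learning` helpers are names I made up for illustration. The point is only that prompts and lessons live as plain files that git tracks.

```python
from datetime import date
from pathlib import Path
from string import Template
from typing import Optional


def load_prompt(repo_root: Path, name: str, **variables: str) -> str:
    """Read prompts/<name>.md from the repo and fill in $placeholders.

    The prompts/ directory is a hypothetical convention, not a vendor feature.
    """
    text = (repo_root / "prompts" / f"{name}.md").read_text(encoding="utf-8")
    return Template(text).substitute(**variables)


def record_learning(repo_root: Path, note: str, day: Optional[date] = None) -> Path:
    """Append a dated note to docs/learnings.md so it is versioned with the code."""
    log = repo_root / "docs" / "learnings.md"
    log.parent.mkdir(parents=True, exist_ok=True)
    stamp = (day or date.today()).isoformat()
    with log.open("a", encoding="utf-8") as fh:
        fh.write(f"- {stamp}: {note}\n")
    return log
```

Commit both directories like any other source file. Whatever tool you use next year can read them, because they are just text.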

The real risk for small businesses is not that Anthropic will turn evil. It is that you will build your entire development workflow around a feature that vanishes or triples in price the moment it becomes essential to your operation.

Multiple developers in that thread are already talking about jumping to OpenCode, Codex, or alternative harnesses. They saw this coming. They are not waiting for the rug pull.

One commenter noted that Claude Code's Memory already commits learnings to a provider-specific path that will not persist in git. That is the tell. The vendor is building your development memory into their platform. If you leave, you leave without your history.

My take: this is the most underrated business risk in AI development right now. Small agencies and solo operators are the most exposed because they do not have leverage to negotiate contracts or engineering resources to maintain parallel systems. You are one pricing change away from a rebuild.

Draw the line at features that make migration painful. Use the harness. Use the model. But own your own workflows.
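One way to draw that line in code is to treat the model as a plain function from prompt to text and keep the workflow itself in your own code. The sketch below assumes exactly that and nothing more: `Backend`, `run_workflow`, and the two stand-in backends are hypothetical names, and the stand-ins echo their input instead of calling any real vendor SDK.

```python
from typing import Callable, Dict, List

# A backend is any function that turns prompt text into response text.
Backend = Callable[[str], str]


def stub_backend_a(prompt: str) -> str:
    # Stand-in for a real client wrapper; swap in an actual API call here.
    return f"[backend-a] {prompt}"


def stub_backend_b(prompt: str) -> str:
    # A second stand-in, to show that switching is a one-line change.
    return f"[backend-b] {prompt}"


BACKENDS: Dict[str, Backend] = {"a": stub_backend_a, "b": stub_backend_b}


def run_workflow(steps: List[str], backend: Backend) -> List[str]:
    """Run each step through whichever backend was passed in.

    The list of steps is the workflow you own; the backend is replaceable.
    """
    return [backend(step) for step in steps]


if __name__ == "__main__":
    steps = ["summarize the diff", "draft release notes"]
    for line in run_workflow(steps, BACKENDS["a"]):
        print(line)
```

If a vendor feature cannot be expressed behind something this thin, that is a sign it belongs on the "makes migration painful" side of the line.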

If your AI's learnings disappear when you switch models, that is not a tool. That is a dependency you cannot afford to ignore.

Sources:

- Hacker News Discussion
- Claude Code Routines Documentation